Between 6 and 24: The Neuroscience of Why Your Last Training Sucked

PART 1: THE LIE

You've been lied to about how learning works.

It’s a lie of omission. Or better said, it’s a lie of inertia, but a lie regardless.

The lie comes in two equally defective variations:

Variation One: "Scale is democratization."

This is the promise of MOOCs (Massive Open Online Courses).

COVID hit. Live training vanished. Corporations cheered as they moved training “online.”

Udemy, Coursera, and YouTube University exploded with hundreds of "Ultimate Masterclasses." They have 4.2-star ratings and boast 4,847 enrolled students.

The pitch is seductive: "Learn anything, anywhere, with thousands of peers! Education for the masses!"

What you actually get: 61 videos no one finishes, a forum where three people argue whether the instructor said "webhook" or "web hook," and a certificate that signals exactly one thing—you paid $47 for watching 67 minutes of content before life got in the way.

Completion rates for MOOCs are ~8%.

That's not a typo.

But hey, at least the training “scaled” and corporations could cut their training budgets by 85%.

Variation Two: "Intimacy is mastery."

$5,000 for 1-on-1 mentorship. You get "personalized attention" and "customized curriculum."

The pitch: "Large group courses are for peasants. Real learning happens in private."

What you actually get: expensive hand-holding where the coach thinks for you, maybe.

Even with the pre-sale claims of endless availability, the head guru is curiously unresponsive, and the people you interact with are employees, who may or may not be on staff next month.

One-on-one teaching has its place. For example, it’s essential when the educator is helping the student work through their specific cognitive block.

But as a primary learning methodology? No.

You're paying top dollar to deprive yourself of the most powerful learning mechanism humans have: watching other people struggle with the same problem from different angles.

Here is the defect in both models: The number of participants.

When we build new knowledge, we first investigate how others built their mental models. We need a cross-section. The ideal setting for mastering complex skills is neither the largest nor the smallest possible environment.

The number is between 8 and 24 people.

Why that range?

That range fits both your brain's processing capacity and the boundaries of social dynamics: big enough for intellectual safety, small enough that everyone contributes.

PART 2: THE NEUROSCIENCE RECEIPTS

Why 247 People on a Webinar Is Educational Theater, Not Learning

When you join a webinar with hundreds of participants, something predictable happens: The chat interface fills up with shoutouts and side conversations that are, at best, just rude. Anything valuable gets buried in the noise. And if you try to read the valuable comments, you suddenly can’t hear the speaker.

No, this is not a sudden loss of hearing; this is directly tied to the brain’s modality architecture. We can read. Or we can listen. Pick one.

So, husbands, the next time you are reading something and your wife says, “Didn’t you hear me?” the correct and unapologetic answer is, “No, God intended for me to ignore you when I’m reading because my hearing no longer works. It’s brain science, sweetheart.”

Uh . . .

Er . . .

Ok, maybe don’t say that. But the point is made. You cannot read and listen at the same time.

And if the facilitator dares to let people actually unmute and ask questions, then unless that facilitator is excellent, 2 people will monopolize 70% of the discussion within minutes.

We’ve all experienced the same MOOC phenomenon. By the time the event hits triple-digit attendance, you are not in a learning environment—you have an audience watching extroverts perform. Everyone else is digital wallpaper.

Now let’s talk about facilitators.

Dear Reader, meet Dunbar's number.

Humans can maintain social relationships with roughly 150 people, but the number for deep cognitive tracking (the ability to understand someone's current mental model, predict their misconceptions, and adjust explanation in real time) maxes out around 20 people, give or take.

The AMEE Guide on effective small-group learning identifies 6-12 as optimal for tutorial-style teaching, noting that beyond about 20 participants, facilitators hit limits in their ability to probe individual schemas through open questioning.

We could list an entire bibliography supporting this limit on effective learning, but here is the bottom line: the facilitator in a 247-person webinar is not teaching; they're broadcasting.

They have no idea whether you're building understanding or just nodding along. They can't see when you're confused. They can't catch the moment where your mental model diverges from reality. They're talking at the aggregate, not with individuals.

Okay, so big groups don't work. What about tiny ones?

Here's where the neuroscience gets into the weeds. The temptation is to turn this article into an academic paper. We’ll add some reference material at the end, but for now, accept this summary.

Your brain learns by observing diverse approaches to the same problem. This taps into mirror neuron systems—watching someone else wrestle through a challenge literally lights up similar neural patterns in your own head.

But here's the critical piece: you need exposure to enough different approaches to build a robust mental model.

Research on small-group learning (including guides used in medical and professional training) shows that you hit a sweet spot around 6+ participants. Below that, groups often fall into what researchers describe as premature convergence—they lock onto the first decent idea because there simply aren't enough alternative viewpoints to create real friction.

Studies on collaborative work repeatedly find that tiny groups of three to five settle too fast and miss deeper learning, while 6+ reliably surface more distinct mental models—even when the total talking time stays the same. The difference isn't "less airtime per person." It's when the group exposes enough varied “blueprints” that real learning begins.

Here's what this looks like in practice:

3-person group discussing automation workflows:

Person A: "I'd use a webhook trigger."

Person B: "Yeah, that makes sense."

Person C: "Agreed, webhooks are the way."

Convergence achieved in 30 seconds. But did anyone actually learn anything? Or did they just confirm their shared assumption?

7-person group, same challenge but different outcome:

Person A: "Webhook trigger."

Person B: "I was thinking form submission."

Person C: "Could you use a calendar booking?"

Person D: "Wait, what's the difference between those triggers?"

Person E: "I tried webhooks last week and couldn't get them working."

Person F: "That's because webhooks require the receiving platform to listen—forms don't."

Person G: "So the trigger choice depends on which platform initiates the data flow?"

Now we're learning. Multiple approaches surfaced. A knowledge gap was revealed (Person D). A past failure was examined (Person E). An underlying principle was discovered (Person G).

This is why groups of five or fewer struggle: there isn't enough cognitive diversity to guarantee productive disagreement. And learning happens in the friction between different mental models, not in premature agreement.

The second problem with tiny groups: psychological exposure.

In a 3-person cohort, if you ask a “dumb” question, 100% of your peers hear it. The social cost feels enormous—especially for executives and business owners whose professional reputations are valuable assets.

Adults already carry a healthy dose of fear about looking incompetent (a well-documented dispositional barrier in adult learning). In a tiny group, fear gets magnified because there’s no social diffusion of the risk—every eye is on you.

But in a 6+ group, your question diffuses across more people. There’s statistical safety: someone else probably had the same question. And indeed, in our cohorts, we see this pattern repeatedly—one person finally asks the “stupid” question, and you can watch half the group exhale and relax because they were wondering the same thing.

So why do we set our minimum at 8 instead of 6?

Because life happens: We’ve accepted cohorts of 6, and inevitably a kid gets sick, there is a client emergency, the dog ate the homework, blah blah blah. If 2 people drop, we are below the threshold. Starting at 8 provides a buffer that maintains critical mass even when life happens.

Just to be clear, we don’t want anyone to drop, and if you participate in one of our cohorts, missing the engagement is a problem, but our lower bound of 8 is a strategic choice.
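For the arithmetic-minded, the buffer logic can be sketched in a few lines of Python. This is only an illustration: the threshold of 6 comes from the research summarized above, while the function and constant names are made up for this example.

```python
# Illustrative only: names are hypothetical, the numbers come from the article.
MIN_EFFECTIVE_SIZE = 6  # below this, groups tend to converge too early


def remaining_after_dropouts(start_size: int, dropouts: int) -> int:
    """Number of participants left once some people drop out."""
    return max(start_size - dropouts, 0)


def stays_above_threshold(start_size: int, dropouts: int,
                          threshold: int = MIN_EFFECTIVE_SIZE) -> bool:
    """True if the cohort still has critical mass after the dropouts."""
    return remaining_after_dropouts(start_size, dropouts) >= threshold


if __name__ == "__main__":
    for start in (6, 8):
        for dropped in (0, 1, 2):
            status = "ok" if stays_above_threshold(start, dropped) else "below threshold"
            print(f"start={start} dropouts={dropped} -> {status}")
```

A cohort that starts at 6 has no slack at all, while a cohort that starts at 8 can lose two people and still sit at the threshold.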

The Goldilocks Zone: 8-24 Is Where Learning Happens

Between 8 and 24 people (with a sweet spot around 15), you get three things simultaneously:

Enough diversity to challenge assumptions

Few enough people for genuine tracking by the facilitator

The social dynamics that make peer teaching inevitable

Schema Revelation Through Dialogue

Schemas are how you think about stuff. They are the structure, or the scaffolding, of how you organize concepts.

Schemas are cognitive blueprints, organized mental frameworks developed from past experiences. They efficiently structure our knowledge, guiding how we perceive, interpret, and recall new information to make sense of the world.

For example, when you read about a restaurant experience, your "restaurant schema" provides the blueprint: entrance → host → table → menu → order → eat → pay.

This blueprint has empty slots that fill with specifics (Italian restaurant, waiter named Marco, pasta dish). You don't need every detail explained because your blueprint provides the structure, allowing you to fill gaps and make predictions about what happens next.

New information activates your brain's existing schemas, providing context and meaning. These mental structures hold interconnected concepts, expectations, and organized knowledge.

Schemas operate through key processes:

construction (building new frameworks)

activation (bringing relevant knowledge online)

assimilation (fitting new information into existing structures)

accommodation (modifying schemas when new information doesn't fit)

Schemas guide attention, comprehension, and information encoding/retrieval, influencing learning and memory.

Now, with this new information added to your schema, let’s consider what happens when you watch your favorite SaaS guru’s feature function tutorial.

They click through the process with effortless precision, and like magic, the SaaS thingy they are building appears on the screen.

But what is missing?

You know the answer. The logic behind their actions is invisible. You don’t see the thinking that produced the outcome. This is a learning challenge that every YouTube University student has experienced.

And it only gets worse.

Because you had no exposure to the foundational blueprint, your own thinking remained invisible.

WHAT did you understand?

(Shrug)

WHY did you understand it?

(Shrug)

What DIDN’T you understand and WHY DIDN’T you understand it?

(Double Shrug)

You may have paused the video, trying to replicate what you saw: click, click, click.

If you were fortunate, it worked exactly like it did in the video, but you came back the next day and couldn’t replicate it to save your life. You couldn’t remember the workflow. You couldn’t remember the endless caveats and addenda that the guru made look seamless.

Why?

You duplicated a process, but you did not gain a schema. You didn’t build any mental structures to review. Your brain could not recall what it watched because no memory structure was constructed.

The lightbulb just went on for many people reading this.

You remember the training that didn’t last longer than 24 hours.

Now you know why.

Now that you understand schemas, you can understand the power of small-group learning.

Small group facilitation inverts this dynamic.

A well-designed cohort of 8 to 24 individuals has an array of blueprints filled with mental structures, interconnected concepts, and organized knowledge.

The cohort is led by an outstanding facilitator whose chief responsibility is to create opportunities for participants to express their current understanding and, subsequently, to address and correct any misunderstandings or errors.

Here's what that looks like in practice:

Scenario: Teaching automation workflows.

Bad (tutorial) approach: "Here's how to set up a trigger. Click here, select 'form submission,' then add your filter criteria like this..."

Good (cohort) approach: "You've got a dental office that wants to send a different confirmation email depending on whether it's a new patient or a returning patient. Talk to your neighbor—how would you structure that workflow? You have 6 minutes."

What happens in those 6 minutes?

Person A says: "I'd use two separate forms, one for new patients and one for returning."

Person B says: "Wait, couldn't you use one form with a dropdown field and filter based on the selection?"

Person A pauses. "Oh. Yeah, that's... simpler. But how do you filter on a form field?"

Abracadabra . . . learning.

Person A just learned:

Their initial mental model (separate forms) works, but is inefficient

A better approach exists (single form with conditional logic)

They see that they have a knowledge gap (don't know how to implement field-based filtering)

Person B just learned:

Their approach is valid (social validation)

By explaining it to Person A, they'll consolidate their own understanding (more on this in a moment)

The facilitator just learned:

Person A understands triggers but doesn't know filters

Person B is ready for more advanced conditional logic

The whole group needs a deep dive into filters next

This is what the research calls schema revelation through dialogue. By requiring participants to articulate their thinking, you surface misconceptions before they get baked into practice.

This is where real learning happens.
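For readers who want to see Person B's idea concretely, here is a minimal Python sketch of "one form, one dropdown, filter on the selection." It is not any particular platform's API. The field name `patient_type` and the template names are assumptions invented for illustration.

```python
# Hypothetical sketch: field and template names are invented for illustration.
def choose_confirmation_email(submission: dict) -> str:
    """Pick an email template based on a single dropdown field in one form."""
    patient_type = str(submission.get("patient_type", "")).strip().lower()

    if patient_type == "new":
        return "new_patient_welcome"
    if patient_type == "returning":
        return "returning_patient_confirmation"

    # Anything unexpected is flagged instead of guessed at.
    return "needs_manual_review"


if __name__ == "__main__":
    print(choose_confirmation_email({"name": "Pat", "patient_type": "New"}))
    print(choose_confirmation_email({"name": "Lee", "patient_type": "returning"}))
```

The filter is just a branch on one field's value, which is why a single form can replace Person A's two separate forms.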

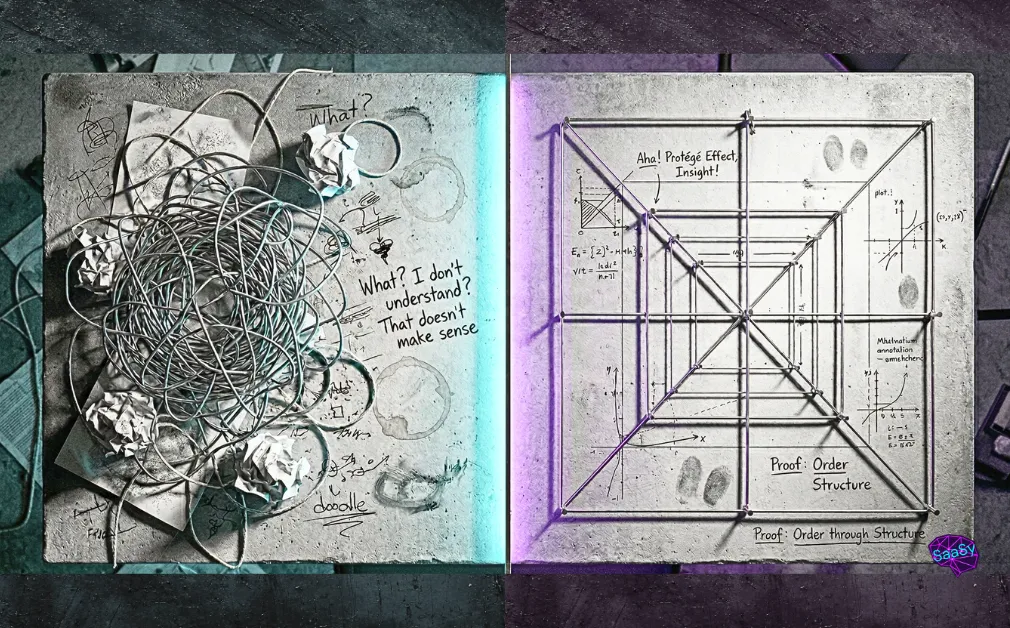

The Protégé Effect: Why Peer Teaching Increases Retention

Now, Dear Reader, let us introduce you to the Protégé Effect.

When you teach something, your brain processes knowledge differently. To teach, you have to:

Organize information hierarchically (What's foundational? What's advanced?)

Identify and fill your own knowledge gaps (I can't explain what I don't understand)

Generate examples and analogies (How do I make this concrete for someone else?)

Engage in metacognitive monitoring (Do I actually understand this?)

Knowing something to teach it is vastly different from knowing something to do it.

This is why in our Insight Cohorts, peer teaching isn't optional—it's inevitable.

Those who participate in our cohorts and work through the self-paced exercises will soon realize that completion is not optional. You will understand it is your responsibility to add value to the group. The group is counting on you to have done the work. The result is that you will have invested the mental capital required to understand how to teach this to someone else. Your competence will skyrocket.

Your motivation to learn and your brain's focus on the task both increase by orders of magnitude.

You will find that in every SaaSy Brainformative cohort, everyone will play both roles: teacher and student.

Those of you who participate in our Minimum Viable System Founders Cohort will certainly see this line in your Pre-Cohort email: “Come prepared to explain . . .”

PART 3: WHAT THIS LOOKS LIKE IN PRACTICE

Okay. Enough theory. Let's talk about what schema-building facilitation looks like.

The Facilitator's Three Core Moves

In a cohort of 8-24 people, the facilitator isn't lecturing. They're orchestrating. And they're using three specific moves on repeat:

Move 1: Redirect (Socratic Probing)

A participant says, "I can't get the webhook to fire."

Good response: "Walk me through what you expected to happen when you submitted that test form."

What's happening here?

The response forces the participant to externalize their mental model. As they explain what they expected, they'll often catch their own error: "Oh, wait, I never set up the trigger in the first place."

Even if they don't catch it, their explanation reveals where their understanding breaks down.

Move 2: Refine (Building Connections)

A participant successfully builds a simple workflow: form submission → trigger → send email.

Good response: "Nice. Before we move on, Jamie, you built something similar last week with a different trigger. How does Sarah's approach compare to yours?"

The good response forces connection-building. By asking Jamie to compare approaches, you're:

Reinforcing Jamie's learning (retrieval practice)

Giving Sarah a second perspective (elaboration)

Revealing underlying patterns (both solutions use event → action structure)

This is how you build transferable mental models instead of memorized procedures.

Example continuation:

Jamie: "I used a calendar booking as my trigger instead of a form, but yeah, same basic structure."

Facilitator: "Exactly. So what's the common pattern here? What's the 'Thing' that makes any workflow work, regardless of the trigger type?"

Someone in the group: "An event happens, and you define what action to take in response?"

Facilitator: "Yes. That's the fundamental logic of automation: Event → Condition → Action. Once you understand that pattern, you can build any workflow. The specific trigger—form, calendar, webhook, whatever—is just plugging different event types into the same structure."
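The Event → Condition → Action pattern the facilitator names can be sketched in plain Python. Treat this as a rough illustration, not a real automation tool: the `Rule` class, the `run_rules` function, and the event names are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Callable, List


# Hypothetical structure: one rule = which event, under what condition, do what.
@dataclass
class Rule:
    event_type: str                     # e.g. "form_submission", "calendar_booking"
    condition: Callable[[dict], bool]   # should this rule fire for this payload?
    action: Callable[[dict], str]       # what to do (here: describe the action)


def run_rules(rules: List[Rule], event_type: str, payload: dict) -> List[str]:
    """Run every rule whose event matches and whose condition passes."""
    results = []
    for rule in rules:
        if rule.event_type == event_type and rule.condition(payload):
            results.append(rule.action(payload))
    return results


# Sarah's form trigger and Jamie's calendar trigger share the same structure.
rules = [
    Rule("form_submission",
         lambda p: bool(p.get("email")),
         lambda p: f"send confirmation to {p['email']}"),
    Rule("calendar_booking",
         lambda p: True,
         lambda p: f"send reminder for {p['slot']}"),
]

if __name__ == "__main__":
    print(run_rules(rules, "form_submission", {"email": "sarah@example.com"}))
    print(run_rules(rules, "calendar_booking", {"slot": "Tuesday 10:00"}))
```

Swapping the trigger only changes the `event_type` string. The loop that checks the condition and runs the action never changes, which is the transferable pattern.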

Move 3: Reframe (Normalizing Struggle)

Participant (aggravated to the point of cussing under their breath): “I can’t get logged in!”

Good response: “I can tell you are frustrated right now. This is a new workflow for you, but look at the URL you’ve typed in.”

Participant: “But it doesn’t work!”

Good response (pointing at the screen): “I know you are frustrated, but I need you to pause. Now... read out exactly what is there.”

Participant: “login dot mirosft dot . . . oh. I see.”

Frustration, confusion, and even anger are predictable phases of learning complex material. They're not signs of failure; they're signs that the learner's amygdala is doing its job. Humans hate change.

Hate.

HATE!

Humans hate change because the brain sees change as an existential threat. Learning new things means confronting the pain of being a novice again, particularly for adults who have already earned proficiency in many things.

The facilitator's job is to reframe that pain toward a productive state.

Learning necessitates the brain choosing to revise its schema. But “being wrong” is tantamount to an existential threat. Depending on the depth and impact of that schema change, this can be excruciating.

PART 4: WHY NOBODY TEACHES LIKE THIS (Even Though The Data Is Clear)

Two reasons: Legacy and Economics

Reason 1: Legacy, From Medieval Origins to Industrial Systematization

The Sage on the Stage, Lecture-Listener model is so endemic to education that most people don’t know that any other method is possible.

Think of every classroom you’ve entered. From primary school to university to boardroom, they are all oriented toward one focal point, with the expectation that those in the audience will sit while the expert talks and talks and talks and talks and talks and talks and talks and talks....

Western culture has spent trillions of dollars building edifices to replicate a model that dates back to the 11th century. The Lecture-Listener format began in Medieval European universities: the University of Bologna (founded 1088) and the University of Paris (which emerged around 1150).

The only noticeable change came with the Industrial Revolution. But it didn’t change how education was delivered, only how students were organized. We turned education into a factory with age-based levels, standardized curricula, seat-time requirements, and bell schedules. Human learning was turned over to behaviorist conditioning.

The model for knowledge transfer has remained largely unchanged for nearly a millennium. This persistence in a technological era is comparable to 21st-century physicians resorting to bloodletting with leeches. While leeches have valid medicinal uses, if a doctor's sole treatment was to bleed the sick, you would certainly stay away.

Don't be misled by the rise of “learning” technologies (LMS, content authoring tools, multimedia creation tools, web conferencing, etc.) into thinking that these represent an appreciable change.

Whenever we at SaaSy Brainformative are asked which technologies are best for learning, we say none.

Technology does not learn; people learn. Chalk and slate are technologies used for teaching. Pen and paper are technologies used for teaching. These technologies were used for hundreds of years to great effect. Don’t be dazzled by the modern “technological” versions. At the root, technology is merely a tool to augment the primary outcome: human learning.

(In a future article, we will address the rise of AI and its role in human education, but for now, the point is made: “technology” is not a learning magic bullet.)

While some technologies improve how content can be assembled and distributed, all of them suffer from the same root issue: they have inherited our tell, tell, tell, tell teaching model. Innovations are therefore largely irrelevant, because they are still used to perpetuate the legacy model.

A lecture remains a lecture, whether delivered by a medieval monk or a contemporary YouTube instructor. The core dynamic—one person speaking at length while others passively listen—is unchanged.

The internet didn't fix this—it made it worse.

Now the logistical constraint (physical space) is gone, so we scaled the broken model to infinity. MOOCs are just lecture halls without walls. And we act surprised when they produce the same mediocre outcomes, just at greater volume.

Reason 2: The Economics of Infinite Margin

The MOOC investor pitch:

"We record content once. We can sell it to 10 people or 10,000 people—same cost structure. Gross margin approaches 100% at scale."

That's catnip for venture capital.

Udemy, Coursera, and Lynda.com (now LinkedIn Learning) are not evil. The people who built those platforms were rational actors within a broken incentive structure. If your business model rewards headcount over outcomes, you build for headcount.

And it doesn’t get any better in the corporate boardroom.

Before COVID, corporations were spending roughly 29 billion dollars on brick-and-mortar training.

This time frame was during the early stages of Brainformative’s Insight Design delivery, and the pressure to put our methodology “online” was enormous, even though we could demonstrate better results (by orders of magnitude) with our brick-and-mortar cohort structure.

We often pointed out that if cost was the concern, bad training was too expensive to consider. Our pushback was to no avail. The C-suite saw abolishing in-person training in favor of "technology" as a major corporate windfall.

COVID accelerated the trend: companies replaced in-person training with video libraries while extolling the swap as 'digital transformation.'

The global lockdown was certainly awful, but at least now everyone has experienced the excruciating pain of endless video tutorials and seen firsthand that MOOCs don’t work.

PART 5: THE ARTICLE YOU JUST READ WAS A COHORT OF ONE

Here’s the problem with this article. You read it alone.

You couldn't turn to the person next to you and say, "Wait, I don't understand why mirror neurons matter here."

You couldn't watch someone else struggle with the schema concept and have that lightbulb moment when they finally get it.

You couldn't explain the Protégé Effect to someone who's still confused about it—which means you didn't get the retention boost that comes from teaching.

This article explained the theory of why 8-24 peer-based learning works, but it couldn't give you the Insight Design experience.

Reading about a concept activates different neural pathways than using a concept in conversation

Passively consuming information creates the fluency illusion; actively teaching it creates durable memory

Individual study works for memorization; social learning works for application

This article gave you information. An Insight Design-based cohort would give you competence.

Welcome to SaaSy Brainformative

As Promised:

Edmunds, S., & Brown, G. (2010). Effective small group learning: AMEE Guide No. 48. Medical Teacher.

Burgess, A., et al. (2020). Facilitating small group learning in the health professions. BMC Medical Education.

Hübscher-Younger, T., & Narayanan, N.H. (2003). Authority and convergence in collaborative learning (or the 2002 ISLS proceedings version).

For broader cooperative learning effects: Springer, L., et al. (1999). Effects of small-group learning on undergraduates in SMET: A meta-analysis. Review of Educational Research.